So far I have been scraping my website for a list of the divs, links and pictures that it contains; however, I also want to interact with my site. Back in part 1 I briefly wrote about the GET method that is used when asking for data from a web page. Its counterpart is the POST method, which is used to send information to a web page.

On www.geektechstuff.com there is a search box which can be used to receive text from a visitor and search for the text that the visitor has entered into the box.

There are a few ways to identify the search box as an input:

- Manually visit the website and try entering text into the box.

- Manually visit the website, open the web browser's developer tools and look at the search box’s values.

- Use Python and BeautifulSoup to search the website for input elements.

The Python option takes only a few lines of code.

I have asked Python/BeautifulSoup to find all mentions of ‘input’ and return both the full results and just the value of each one. I think doing it this way helps when looking for certain inputs, especially if the website designer has been kind enough to name the input values appropriately (e.g. Search).
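As a rough sketch of that search, something along these lines should work. The `SAMPLE_HTML` string and the `find_inputs()` helper are my own stand-ins (not the original program), and the markup is made up so that the example runs without downloading anything; a real page will look different.

```python
from bs4 import BeautifulSoup

# Hypothetical markup standing in for a downloaded page (an assumption).
SAMPLE_HTML = """
<form action="/" method="get">
  <input type="search" name="s" value="" placeholder="Search">
  <input type="submit" value="Search">
</form>
"""


def find_inputs(html):
    """Return (element, value) pairs for every <input> element in the HTML."""
    soup = BeautifulSoup(html, "html.parser")
    return [(tag, tag.get("value")) for tag in soup.find_all("input")]


for tag, value in find_inputs(SAMPLE_HTML):
    print(tag)    # the full <input> element
    print(value)  # just its value attribute (None if the attribute is missing)
```

On a live site the HTML would come from a GET request first, and then be passed to `find_inputs()` in the same way.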

At this point I took a side step and started playing with the Python Requests library and interacting with Wikipedia. The function outlined below fails, but I think it is important to understand why it fails. Please do not run the wikipedia_logon() function yourself.

Requests allows data to be sent to a web page (POST) as well as read from a web page (GET). To try out POST I turned to Wikipedia.
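To see the difference between the two without contacting any server, Requests can prepare a request and show what it would send. This is only a sketch: the URL and the `q` field are placeholders I have made up, and nothing is actually transmitted.

```python
import requests

URL = "https://example.com/search"  # placeholder URL (an assumption)

# A GET request carries its parameters in the URL's query string.
get_request = requests.Request("GET", URL, params={"q": "python"}).prepare()

# A POST request carries form data in the request body instead.
post_request = requests.Request("POST", URL, data={"q": "python"}).prepare()

print(get_request.method, get_request.url, get_request.body)
print(post_request.method, post_request.url, post_request.body)
```

The prepared GET has the search term in its URL and no body, while the prepared POST leaves the URL alone and puts the form-encoded data in the body.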

I’m using a logon page on Wikipedia: https://en.m.wikipedia.org/w/index.php?title=Special:UserLogin&returnto=Main+Page and the developer tools within my web browser to view the page headers to see what data is passed when I attempt to login.

A correct login passed the following Form Data to Wikipedia.

Knowing what data the form is expected to POST to the page allows for that data to be put into my Python program.

I added some headers to make my Python program “look” more like a regular browser session by including the user-agent details of the Safari browser running on an Apple Mac.
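A minimal sketch of combining form data with a browser-like header is shown below. The user-agent string is just an example of what Safari on a Mac sends, the `build_form_post()` helper, the URL and the field names are all hypothetical, and the request is only prepared, never sent.

```python
import requests

# Example Safari-on-macOS user-agent string (an assumption, not my exact one).
SAFARI_USER_AGENT = (
    "Mozilla/5.0 (Macintosh; Intel Mac OS X 10_14_6) "
    "AppleWebKit/605.1.15 (KHTML, like Gecko) "
    "Version/13.0 Safari/605.1.15"
)


def build_form_post(url, form_data):
    """Prepare (but do not send) a POST request with a browser-like header."""
    session = requests.Session()
    session.headers.update({"User-Agent": SAFARI_USER_AGENT})
    request = requests.Request("POST", url, data=form_data)
    # prepare_request() merges the session headers into the request.
    return session.prepare_request(request)


# Placeholder URL and field names; a real form defines its own.
prepared = build_form_post(
    "https://example.com/login",
    {"username": "example_user", "password": "example_password"},
)
print(prepared.method, prepared.url)
print(prepared.headers["User-Agent"])
print(prepared.body)
```

Preparing the request first makes it easy to compare the headers and body against what the browser's developer tools show before anything goes over the network.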

The wikipedia_logon() function currently returns the cookie details, a response code (200, which means the HTTP request itself succeeded) and the HTML content of the page.

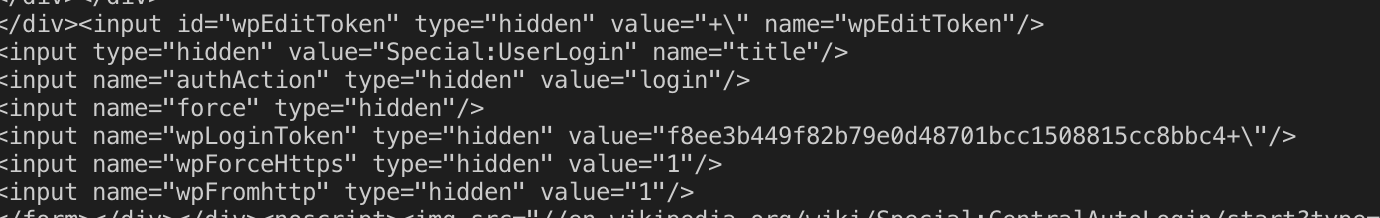

At this point I found a problem. My program is sending the details to the Wikipedia logon page but it does not log in. The reason for this lies in a hidden field within the page’s HTML:

The wpLoginToken field is hidden, its value changes, and it is one of the parameters that the Wikipedia logon is expecting. You may ask, “Why would anyone have this on their site?” and the answer is pretty much to stop bots and the hijacking of sessions. Wikipedia does have some cool APIs for bots to interact with, so I can see why they would not want bots interacting with their logon pages.
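Hidden fields like this can be spotted with the same BeautifulSoup approach used for the search box. The sketch below only reads a made-up HTML string: the `get_hidden_fields()` helper and the token value are illustrative assumptions, and it does not attempt to log in anywhere.

```python
from bs4 import BeautifulSoup

# Hypothetical login form; the token value here is invented for illustration.
SAMPLE_LOGIN_HTML = """
<form method="post">
  <input type="text" name="username">
  <input type="password" name="password">
  <input type="hidden" name="wpLoginToken" value="example-token-123">
</form>
"""


def get_hidden_fields(html):
    """Return a dict of name -> value for every hidden <input> in the HTML."""
    soup = BeautifulSoup(html, "html.parser")
    hidden = soup.find_all("input", attrs={"type": "hidden"})
    return {tag["name"]: tag.get("value", "") for tag in hidden if tag.has_attr("name")}


print(get_hidden_fields(SAMPLE_LOGIN_HTML))
```

Because the real value changes, a copy taken from one page load cannot simply be hard-coded into a later request, which is why my fixed form data is not enough.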

I could create some basic HTML forms to test my program but to me that defeats the purpose as it is building a test to fit the answer. I do think that this little “hidden” input loops very nicely back to my introduction paragraphs on this page though, which is why I have left everything in place (showing the slightly wacky path I have taken with web scraping so far).
