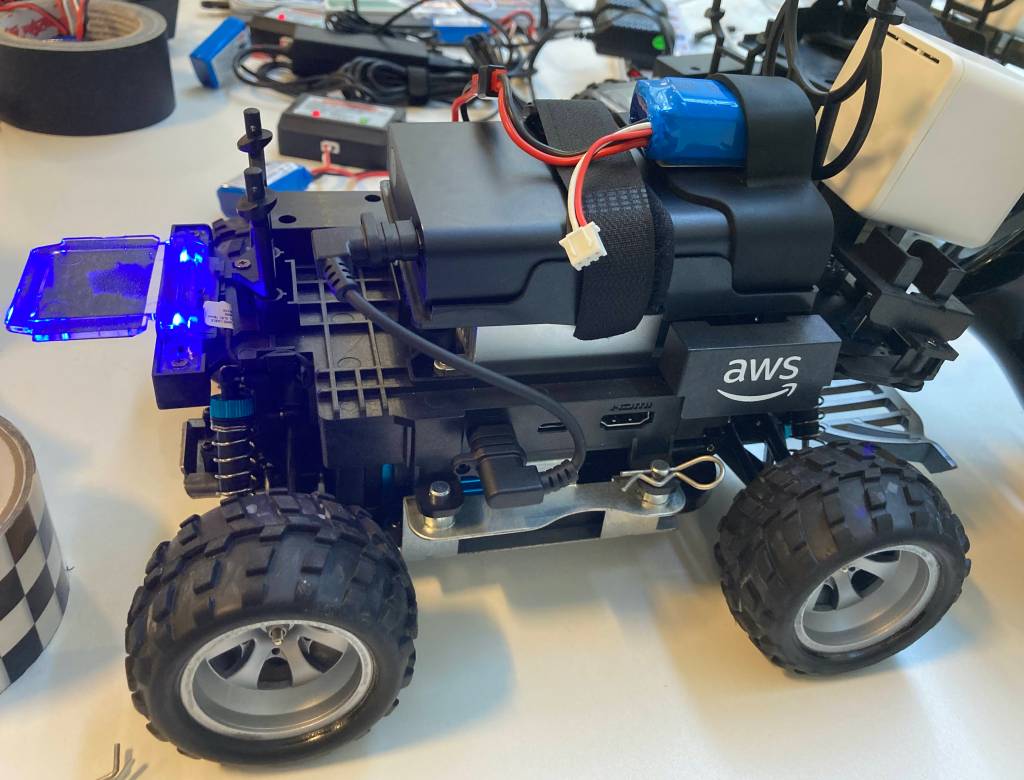

My interest in machine learning has continued to grow and this week I had the opportunity to attend an AWS DeepRacer event. As I’m taking a look at DeepRacer I decided to write this blog post to store my notes and hopefully help me with some of the options.

The aim of DeepRacer is to have a car drive itself around a track in the fastest time possible without the car straying from the track too many times. Unlike devices which are programmed to drive X cm forward and then turn Y degrees, the DeepRacer car learns using reinforcement learning. This learning takes place in a virtual environment and can then be downloaded into a physical car (roughly 1/18th the size of a regular car) to drive around a physical track.

Machine Learning

Supervised Learning

An example of supervised learning is manually training a computer to identify objects using labels, e.g. labelling cats in 1000 photos. The computer would use the label as a teacher and could then identify a cat in a photo. The same process would need to completed to identify other animals (e.g. a dog).

Unsupervised Learning

Unsupervised learning doesn’t use labels (so no teacher) and instead the machine learns on its own. This is where reinforcement learning comes in.

Reinforcement Learning

Reinforcement learning involves programming the machine so that it gets rewards for positive actions and punishments for negative actions. This can be as simple as receiving points whilst driving on a track and losing points when not driving on the track. The machine builds a model which has an environment (the track), an agent (the car), a state (a point in time) and an action (e.g. turn left 45 degrees). The machine then tries various actions at various states in a trial and error fashion to see which receive the most points. Once completed the machine can use that earlier model and run through it again with different actions. Each iteration of the model helps the machine to learn what actions cause rewards and what actions cause punishments.

During each iteration of the model the environment (track) is broken down into steps so that the machine can learn both close rewards (e.g. stay moving forward here) and further away rewards (e.g. need to start turning in a few centre meters).

Isn’t It All Just Python Programming?

The Reinforcement Learning can be broken down into two factors:

Parameters

Which is the Python code written by the creator (i.e. you) to teach the machine what is a positive action and what is a negative action. You add in what you think is important (e.g. stick to the middle of the lane) and then write how this should be rewarded (e.g.stick to the middle of the lane give reward of 1 point, drive in lane give 0.5 point, drive out of lane remove 1 point). There are multiple parameters that can be used, and they can be used in different ways. The parameters are all internal to the model.

| Parameter Name | Type | Syntax | Description |

| all_wheels_on_track | boolean | params[‘all_wheels_on_track’] | If all four wheels are on the track, where track is defined as the road surface including the border lines, then all_wheels_on_track is True. If any wheel is off the track, then all_wheels_on_track is False. Note: If all four wheels are off the track, the car will be reset |

| x | float | params[‘x’] | Returns the x coordinate of the centre of the front axle of the car, in unit meters |

| y | float | params[‘y’] | Returns the y coordinate of the centre of the front axle of the car, in unit meters |

| distance_from_center | float [0, track_width/2] | params[‘distance_from_center’] | Absolute distance from the cente of the track. The centre of the track is determined by the line that links all centre waypoints |

| is_left_of_center | boolean | params[‘is_left_of_center’] | A variable that indicates if the car is to the left of the track’s centre |

| is_reversed | boolean | params[‘is_reversed’] | A variable that indicates whether the car is training in the original direction of the track or the reverse direction of the track |

| heading | float [-180,180] | params[‘heading’] | Returns the heading that the car is facing in degrees. When the car faces the direction of the x-axis increasing (with y constant), then it will return 0. When the car faces the direction of the y-axis increasing (with x constant), then it will return 90. When the car faces the direction of the y-axis decreasing (with x constant), then it will return -90 |

| progress | float[0,100] | params[‘progress’] | Percentage of the track complete. A progress of 100 indicates that the lap is completed |

| steps | integer | params[‘steps’] | Number of steps completed. One step is one state, action, next state, reward tuple |

| speed | float | params[‘speed’] | The desired speed of the car in meters per second. This should match the selected action space. In other words, define this parameter within the limit that you set in the action space |

| steering_angle | float | params[‘steering_angle’] | The desired steering angle of the car in degrees. This should match the selected action space. (In other words, define this parameter within the limit that you set in the action space. Note that positive angles (+) indicate going left, and negative angles (-) indicate going right. This is aligned with 2D geometric processing |

| track_width | float | params[‘track_width’] | The width of the track, in unit meters |

| waypoints | list | params[‘waypoints’] for the full list or params[‘waypoints’][i] for the i-th waypoint | Ordered list of waypoints that are spread around the centre of the track, with each item in the list being the (x, y) coordinate of the waypoint. The list starts at zero |

| closest_waypoints | integer | params[‘closest_waypoints’][0] or params[‘closest_waypoints’][1] | Returns a list containing the nearest previous waypoint index, and the nearest next waypoint index. params[‘closest_waypoints’][0] returns the nearest previous waypoint index, and params[‘closest_waypoints’][1] returns the nearest next waypoint index |

HyperParameters

These are external to the model and can be the secret sauce of turning a good model (e.g. 25 seconds around a track) into a great model (e.g. 8 seconds around a track). However there is not a “go to” setting to get the best result, it can be down to tweaking / tuning the hyperparameters to try and get a better result than the default settings give. A warning here as well that tweaking / tuning can lead to better results but can also cause convergence issues – but that is part of the fun of learning what is best for the model.

The hyperparameters are:

Batchsize

The batch size is how much data (images) the model should work through between each update. The images are randomly selected from the model’s recent experience.

Epoch

The epoch is how many times the batch size data is looped through between each update.

Learning Rate

This controls the speed the machine learns at. A small learning rate means that it takes longer to learn, a large learning rate can cause the machine to fail to reach the optimal solution.

Exploration

Should the model use exploitation, where decisions are made on the data available at the time (e.g. stick to safe path) or should the model use exploration, where additional information is gathered (e.g. maybe a more rewarding path) but also chance more mistakes will be made.

Entropy

More entropy means that the machine will carry out more random actions, which is good for exploration. Less entropy means the machine will carry out less random actions, which is good for exploitation.

Discount Factor

The discount factor helps the model to learn about taking future actions into effect (or not into effect). A smaller discount factor and the model will consider instant rewards more. A larger discount factor and the model will consider later rewards more.

Loss Type

DeepRacer offers two types of loss, Huber Loss and Mean Squared Error Loss. Both behave very similar for small updates to the model but the Huber Loss offers smaller incremental updates to Mean Squared Error Loss when dealing with larger updates. However Mean Squared Error Loss can cause convergence problems with larger updates.

Episodes

How many times the model should go through a loop during its iteration.

How DeepRacer teaches Machine Learning

DeepRacer is built on top of two AWS products:

SageMaker which trains the models using the reinforcement learning, and RoboMaker which builds the virtual space to run the models in using the Gazebo physics engine (simulated gravity, motion etc). Although DeepRacer is fun it teaches how a machine learning model requires extensive testing before being used and how models can be fine tuned to get different results.

This same type of reinforcement learning could be used to teach a machine to trade stocks (sell when price high, buy when price low) or manage staff rotas (increase staffing when busy, lower staffing levels when quiet) by giving the machine older data to train with and reinforcing actions.